Built Trustworthy AI Support Workflows with Escalation Control

Client:

Confidential

Role:

Product Designer

Sector:

Enterprise Support SaaS

Year:

2026

Due to NDA constraints, product screens have been intentionally anonymized. This case focuses on decision logic, workflow design, and operational impact.

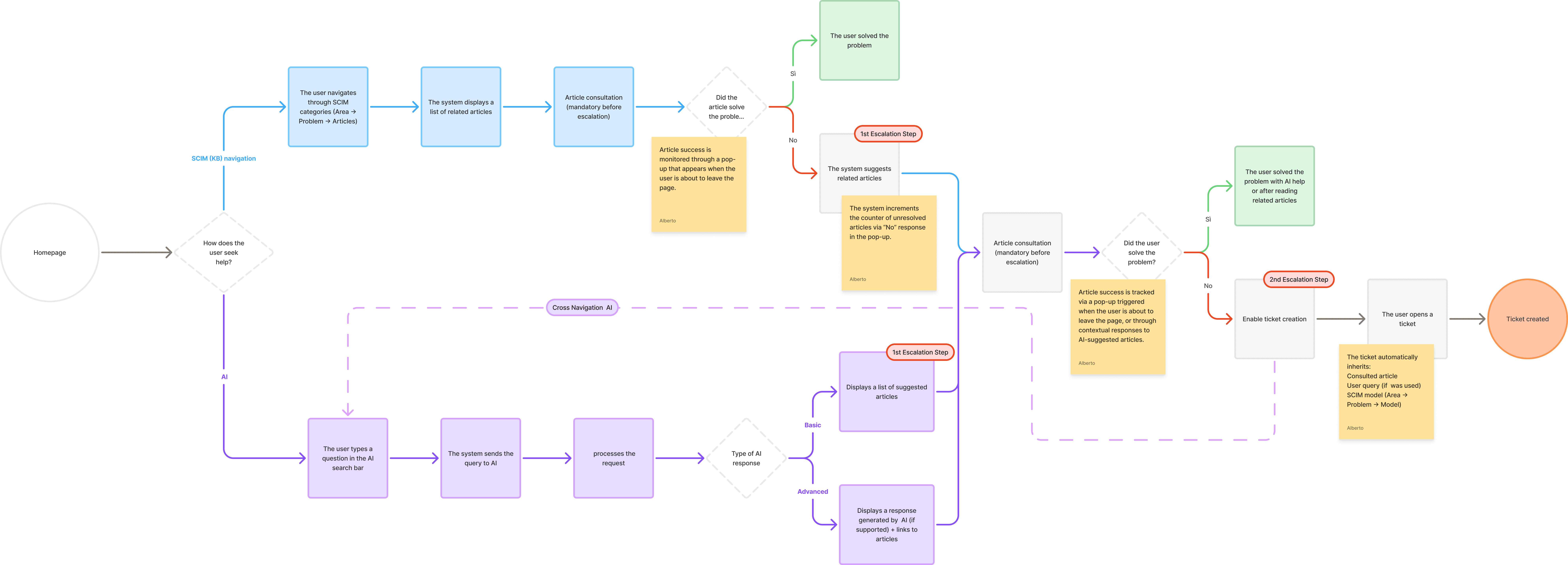

A new entry-tier support model limited default ticket access, so self-service quality became a business requirement. I designed an AI assistant inside the existing support workflow, with source-backed answers and explicit escalation checkpoints.

The goal was practical: resolve more issues in-product, and when resolution fails, route users to human support with clean context, clear eligibility, and predictable policy behavior across tiers.

The Core Problem

Support users needed faster answers, but speed without trust was risky. A chat-only model could produce fluent responses that looked correct even when evidence quality was weak. At the same time, the new tier model required controlled ticket access, so escalation behavior could not remain informal. Without clear checkpoints, users could get stuck between uncertain AI outputs and blocked support actions. The core problem was to improve resolution speed while preserving trust, traceability, and a reliable path to human support. We also needed consistent behavior across tenants with very different documentation quality.

Constraints

User constraint: people needed quick guidance with visible evidence before acting.

Business constraint: entry-tier plans required gated ticket access while premium tiers had broader rights.

Technical constraint: tenant knowledge quality varied, so answer reliability could not be assumed.

Operational constraint: escalation had to map to explicit workflow states, not open-ended chat drift.

Risk constraint: low-confidence states needed visible handling to prevent over-trust.

Key Decisions and Trade-offs

I designed the assistant as a support workflow component, not a separate chat destination. This kept behavior aligned with how teams already resolve issues.

I decided to embed AI at existing support entry points, because parallel interfaces split user behavior. The result was faster adoption and less context switching.

I decided every answer must expose its source evidence in the same decision moment, because trust depends on immediate verification. The result was better user judgment before action.

I decided to define escalation checkpoints tied to unresolved intent plus tier policy, because ticket enablement had to be controlled and explainable. The result was predictable transitions to human support.

I decided to design fallback states with engineering from day one, because model limits are part of real product behavior. The result was clearer low-confidence handling in production.

I decided to separate answer confidence from escalation eligibility, because confidence alone is not enough for policy decisions. The result was fewer unnecessary escalations and fewer blocked high-need cases.

I decided to show escalation reason labels at checkpoint moments, because hidden policy logic creates user confusion. The result was clearer expectations about when tickets are enabled or restricted.
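The checkpoint decisions above can be sketched as a small rule function. This is an illustrative sketch only, not the production logic: the names (`Tier`, `CheckpointInput`, `evaluate_checkpoint`), the 0.5 confidence cutoff, and the two-attempt gate for entry-tier plans are all assumptions made for the example. What it shows is the separation described above: confidence drives only the visible low-confidence state, while ticket enablement depends on unresolved intent and tier policy, and every outcome carries a reason label.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ENTRY = "entry"
    PREMIUM = "premium"


@dataclass(frozen=True)
class CheckpointInput:
    tier: Tier
    intent_resolved: bool       # user confirmed the issue is solved
    self_service_attempts: int  # answers already shown for this intent
    confidence: float           # answer confidence in [0, 1]


@dataclass(frozen=True)
class CheckpointResult:
    show_low_confidence_state: bool  # presentation concern only
    ticket_enabled: bool             # policy concern only
    reason_label: str                # shown to the user at the checkpoint


LOW_CONFIDENCE = 0.5    # hypothetical cutoff for the fallback state
ENTRY_MIN_ATTEMPTS = 2  # hypothetical gate for entry-tier plans


def evaluate_checkpoint(c: CheckpointInput) -> CheckpointResult:
    # Confidence is computed once and never feeds the eligibility branches.
    low_conf = c.confidence < LOW_CONFIDENCE

    if c.intent_resolved:
        return CheckpointResult(low_conf, False, "Issue marked resolved")
    if c.tier is Tier.PREMIUM:
        return CheckpointResult(
            low_conf, True, "Unresolved issue: ticket included in your plan"
        )
    if c.self_service_attempts >= ENTRY_MIN_ATTEMPTS:
        return CheckpointResult(
            low_conf, True, "Unresolved after self-service: ticket enabled"
        )
    return CheckpointResult(
        low_conf, False, "Try the suggested steps first: ticket unlocks if unresolved"
    )
```

In this sketch, a confident answer on an entry-tier plan still unlocks a ticket once the attempt gate is met, and a low-confidence answer does not unlock one by itself; it only triggers the visible fallback state.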

Alternative explicitly rejected:

A pure chat feed without an evidence panel. It increased speed but weakened verification discipline.

What I Owned

I owned trust and escalation interaction strategy: entry-point integration, evidence visibility, checkpoint logic, and fallback semantics. I aligned product and engineering on how tier policy should influence ticket enablement. I also defined decision rules for when the assistant should continue self-service versus when the workflow must expose escalation options, so teams could implement consistent behavior across tenants. I drove recurring design-engineering reviews to keep reliability decisions explicit as model behavior evolved.

What Changed in the Product/System

The system shifted from answer retrieval to evidence-based support decisions with explicit escalation control.

Assistant behavior moved inside the core support flow instead of an isolated chat surface.

Source-backed responses became mandatory before high-impact user actions.

Escalation checkpoints linked unresolved intents to ticket enablement states.

Fallback states standardized low-confidence behavior across tenants.

Ticket handoff quality improved because escalation carried structured context.

In daily use, users moved from "ask and hope" to "check evidence, then decide." The workflow now shows when self-service should continue and when human support should take over.
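To make "structured context" concrete, here is one possible shape for the handoff payload. It is a hedged sketch: the field names, the `EscalationContext` and `SourceRef` types, and the JSON format are assumptions for illustration, not the product's actual schema. The idea is that the ticket arrives with the user's intent, the steps already tried, the sources already shown, and the reason label from the checkpoint, so agents do not have to re-triage from scratch.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class SourceRef:
    title: str
    url: str


@dataclass(frozen=True)
class EscalationContext:
    tenant_id: str
    tier: str
    user_intent: str
    reason_label: str
    steps_tried: list[str] = field(default_factory=list)
    sources_shown: list[SourceRef] = field(default_factory=list)


def to_ticket_payload(ctx: EscalationContext) -> str:
    # asdict() recurses into nested dataclasses, so sources serialize too.
    return json.dumps(asdict(ctx), indent=2)


# Hypothetical example values.
ctx = EscalationContext(
    tenant_id="tenant-123",
    tier="entry",
    user_intent="SSO login fails after domain change",
    reason_label="Unresolved after self-service: ticket enabled",
    steps_tried=["Re-verified domain", "Re-uploaded IdP metadata"],
    sources_shown=[SourceRef("SSO setup guide", "https://example.com/docs/sso")],
)
print(to_ticket_payload(ctx))
```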

Outcomes

Users resolved more issues without leaving the primary support flow because evidence was visible at decision time.

Trust increased because users could verify sources before acting, especially in ambiguous cases.

Tier-policy enforcement improved because ticket enablement was tied to explicit workflow states.

Support teams received cleaner ticket context after escalation, reducing re-triage effort.

What I'd Improve Next

The next iteration would add confidence calibration dashboards, intent-resolution cohorts, and escalation quality scoring by tenant. I would also tune checkpoint thresholds by issue type so routine cases stay fast while high-risk cases escalate sooner. Together, these changes would tighten reliability and preserve usability as tenant complexity and model coverage grow. I would pair this with regular false-closure audits to catch hidden trust failures early.
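Per-issue-type thresholds could be expressed as a simple lookup. The sketch below is an assumption-laden illustration of that idea: the issue-type names, the attempt counts, and the function `reaches_checkpoint` are hypothetical, and real values would come from the cohort and audit data described above.

```python
# Hypothetical: self-service attempts allowed before the escalation
# checkpoint is shown, keyed by issue type.
ATTEMPTS_BEFORE_CHECKPOINT = {
    "password_reset": 3,   # routine: keep self-service going longer
    "billing_dispute": 1,  # higher risk: checkpoint after one attempt
    "data_loss": 0,        # highest risk: checkpoint immediately
}
DEFAULT_ATTEMPTS = 2       # fallback for unclassified issue types


def reaches_checkpoint(issue_type: str, attempts: int) -> bool:
    """Return True once the escalation checkpoint should be shown."""
    limit = ATTEMPTS_BEFORE_CHECKPOINT.get(issue_type, DEFAULT_ATTEMPTS)
    return attempts >= limit
```

This only decides when the checkpoint appears. Whether a ticket is actually enabled at that checkpoint would still depend on tier policy.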